The New Priesthood of Artificial Intelligence

By Matt Stone

We are told artificial intelligence is just a tool.

That is how the language always works when power is trying to slip past scrutiny. It is just a tool. Just an efficiency measure. Just an aid to decision-making. Just a better way to sort information. Just a system that helps institutions work faster and smarter.

That story is convenient. It is also incomplete.

AI is not just helping institutions make decisions. It is increasingly becoming part of the infrastructure through which decisions happen at all. It is shaping what gets noticed, what gets flagged, who gets ranked as risky, who gets delayed, who gets denied, and who gets pushed forward without ever knowing a machine helped determine the path. The real issue is not simply that AI can be biased or opaque, though it can be both. The deeper issue is that AI is becoming a new mediator between human beings and the institutions that govern their lives.

That word matters: mediator.

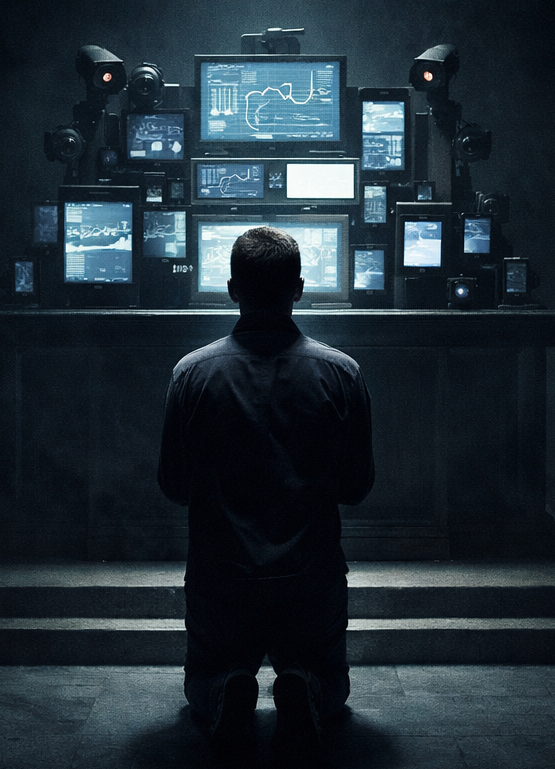

A mediator is not just a messenger. A mediator shapes the relationship between a person and authority. It decides what can be seen, what counts as relevant, what gets interpreted as danger, and what gets dismissed as noise. Priests once mediated between the person and God. Bureaucracies later mediated between the person and the state. Credit bureaus mediated between the person and economic life. Now AI is beginning to mediate all three at once: moral judgment, administrative judgment, and economic judgment, all under the language of neutral systems.

That should bother people more than it does.

Most Americans already know something is off, even if they do not have the words for it. You feel it when a bank suddenly decides you are a risk. You feel it when an algorithmic system rates a neighborhood, flags a transaction, raises an insurance premium, or quietly sorts your application into a pile you will never see. You feel it when institutions act on you before they explain themselves to you. That is the new environment. The machine is not just producing information. It is reorganizing the conditions under which institutions see you in the first place.

And once that happens, the old reassurance starts to fall apart.

People love to say there is still a human in the loop. Fine. But what does that really mean if the human being is looking at a screen already framed by the machine’s categories, alerts, risk scores, and recommendations? What kind of freedom is left when the person doing the reviewing inherits the machine’s assumptions before they ever encounter you as a full human being? A human in the loop is not much comfort if the loop itself has already been built by automated judgment.

That is where the danger becomes harder to see.

The problem is not always dramatic. It does not have to look like killer robots or some Hollywood apocalypse. In fact, the most dangerous form of AI authority is usually much duller than that. It looks like workflow. It looks like triage. It looks like efficiency. It looks like a faster way to process thousands of cases, applications, benefits claims, background checks, fraud alerts, and credit decisions. It looks boring, which is exactly why it is dangerous. Boring systems can still ruin people.

That is especially true in credit scoring.

Credit scores already function as a kind of secular judgment. They follow people through ordinary life and shape what is available to them. They influence borrowing, housing, employment, pricing, and the general atmosphere of suspicion or trust that institutions bring to your name. A low score is not just a number. It can become a social script. It can alter the cost of living, the possibility of mobility, and the kind of future a person is allowed to imagine. Now bring AI into that world and the process gets even harder to challenge. The system becomes faster, more adaptive, more granular, and more difficult for ordinary people to understand. It can absorb more data, infer more patterns, and move more quickly from interpretation to consequence.

That speed is not just a technical feature. It is a political and moral problem.

Institutions love speed because speed looks like competence. But when the capacity to classify people expands faster than the capacity to correct mistakes, speed becomes a weapon. That is the real issue. AI narrows the time between being interpreted and being acted upon. It compresses the gap between judgment and consequence. By the time a person realizes what has happened, the denial has already gone through, the flag has already been raised, the review has already been routed, and the burden has already shifted onto them to prove they are not what the system inferred.

That gap matters more than most policy talk admits.

A society remains at least partly legitimate when people have a meaningful chance to understand, contest, and delay the consequences of judgment. When that interval shrinks, legitimacy starts to rot. A system can be highly efficient and still be unjust. It can be technically impressive and still be morally insane. In fact, technical sophistication often becomes the mask that lets institutions act more aggressively while sounding more reasonable.

This is one reason social media matters to the AI story.

Social media platforms normalized a world built on constant feedback loops. Stimulus, response, reinforcement. Notification, reaction, anticipation. People were trained to live inside systems that watched behavior, learned from it, and adjusted the next prompt accordingly. The point was not just to show users content. The point was to keep them in a loop. AI takes that logic and moves it deeper into institutional life. What began as engagement architecture is becoming governance architecture. The same adaptive logic that once kept people scrolling can now help determine who gets investigated, who gets priced as risky, who gets treated as suspicious, and who gets left fighting a machine-shaped judgment after the fact.

So no, this is not just about gadgets or software or some abstract debate among policy nerds.

It is about the slow construction of a new priesthood. Not priests in robes, but systems no one elected and few people understand, standing between the individual and the powers that shape their life. These systems promise objectivity while absorbing the values of the institutions that build and deploy them. They promise neutrality while redistributing burden downward. They promise efficiency while shrinking the space in which ordinary people can push back.

And the worst part is that this kind of power often feels impersonal, which makes it harder to resist. If a human being looks you in the eye and denies you something, anger has a target. If a machine-shaped institution does it through a chain of automated judgments, the accountability blurs. You get a number. A notice. A silent ranking. A bureaucratic shrug. Good luck finding the altar where the sacrifice was approved.

That is why people need to stop talking about AI as though it were merely a tool.

It is becoming a mediator of modern life. It helps institutions decide what kind of person you are, what kind of risk you represent, and how quickly consequences should arrive. It does not replace human authority. It reorganizes it. It hardens it. It speeds it up and hides it inside systems that look natural once they have been around long enough.

That is the trick. Power survives by becoming ordinary.

The question now is not whether AI will be used by institutions. That part is already over. The question is whether people will accept a world in which judgment arrives faster than explanation, classification carries more weight than reality, and the systems shaping their lives remain largely invisible until the damage is done.

That is not innovation. That is a new theology of control, one we are only just beginning to realize already has us in its grasp.
